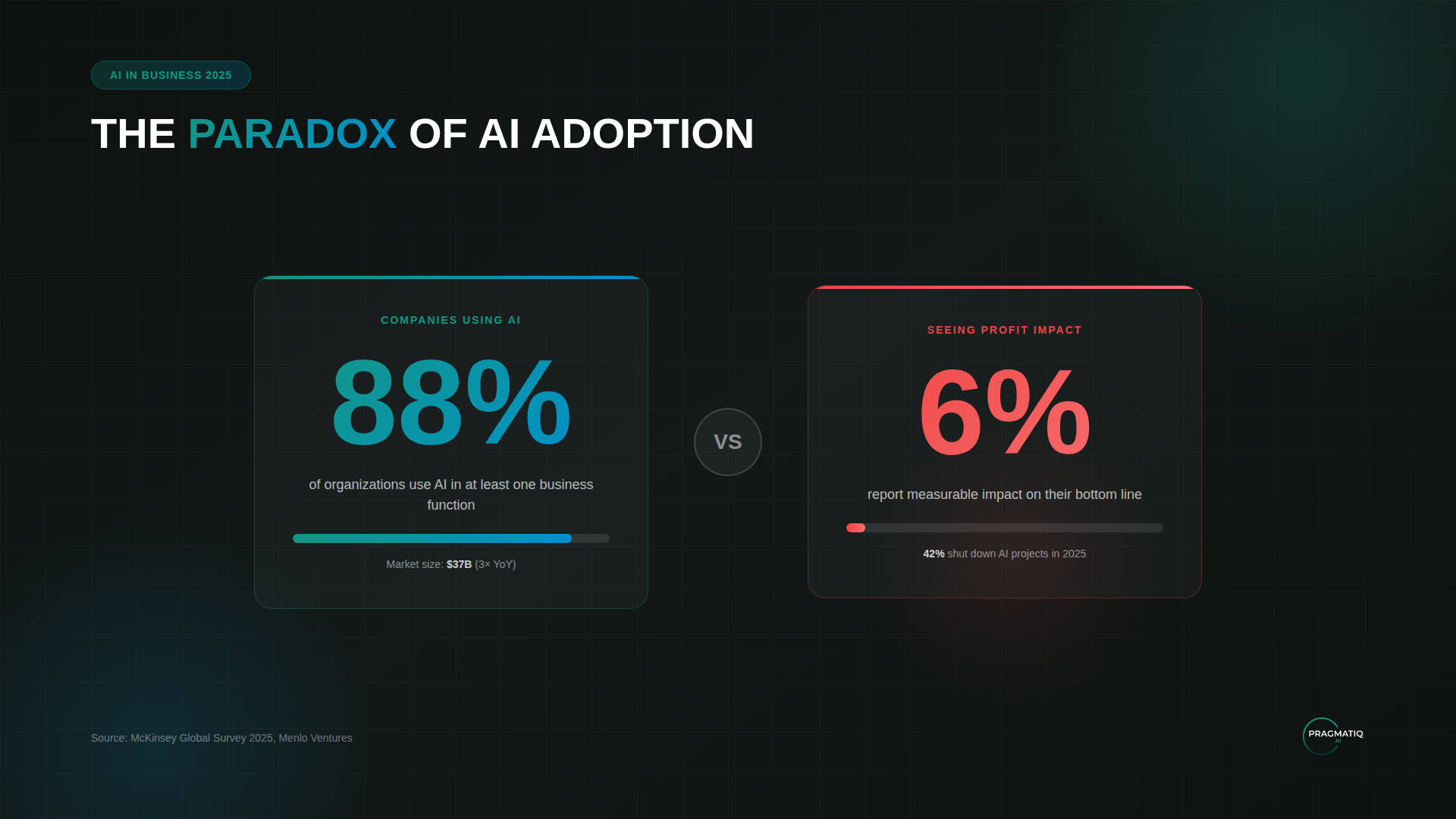

2025 was supposed to be the year of AI transformation. In some ways, it was: 88% of companies started using AI in their work, and the market tripled to $37 billion.

However, only 6% of organizations reported any real impact on their bottom line. And 42% shut down their AI projects entirely—almost three times more than the year before.

What went wrong? Why didn't technologies that were becoming more powerful and cheaper deliver business results?

We analyzed research from McKinsey, Stanford HAI, BCG, and Menlo Ventures for 2025. They all agree on one thing: the problem isn't the technology itself. It's how companies are implementing it.

This article covers the three main reasons for failure, examples of AI causing harm, successful implementation cases, and the specific actions taken by companies that got results.

Technology Made a Leap in 2025

The 2025 models, including GPT-5, Claude 4.5 Opus, and Gemini 3 Pro, learned to reason, write code, analyze documents, and solve tasks that seemed like science fiction just two years ago. Computing costs dropped 280-fold in eighteen months. The performance gap between open-source and proprietary models narrowed from 8% to 1.7%.

Technology is no longer the bottleneck. Implementation is. Why?

Three Reasons Why AI Projects Failed to Deliver

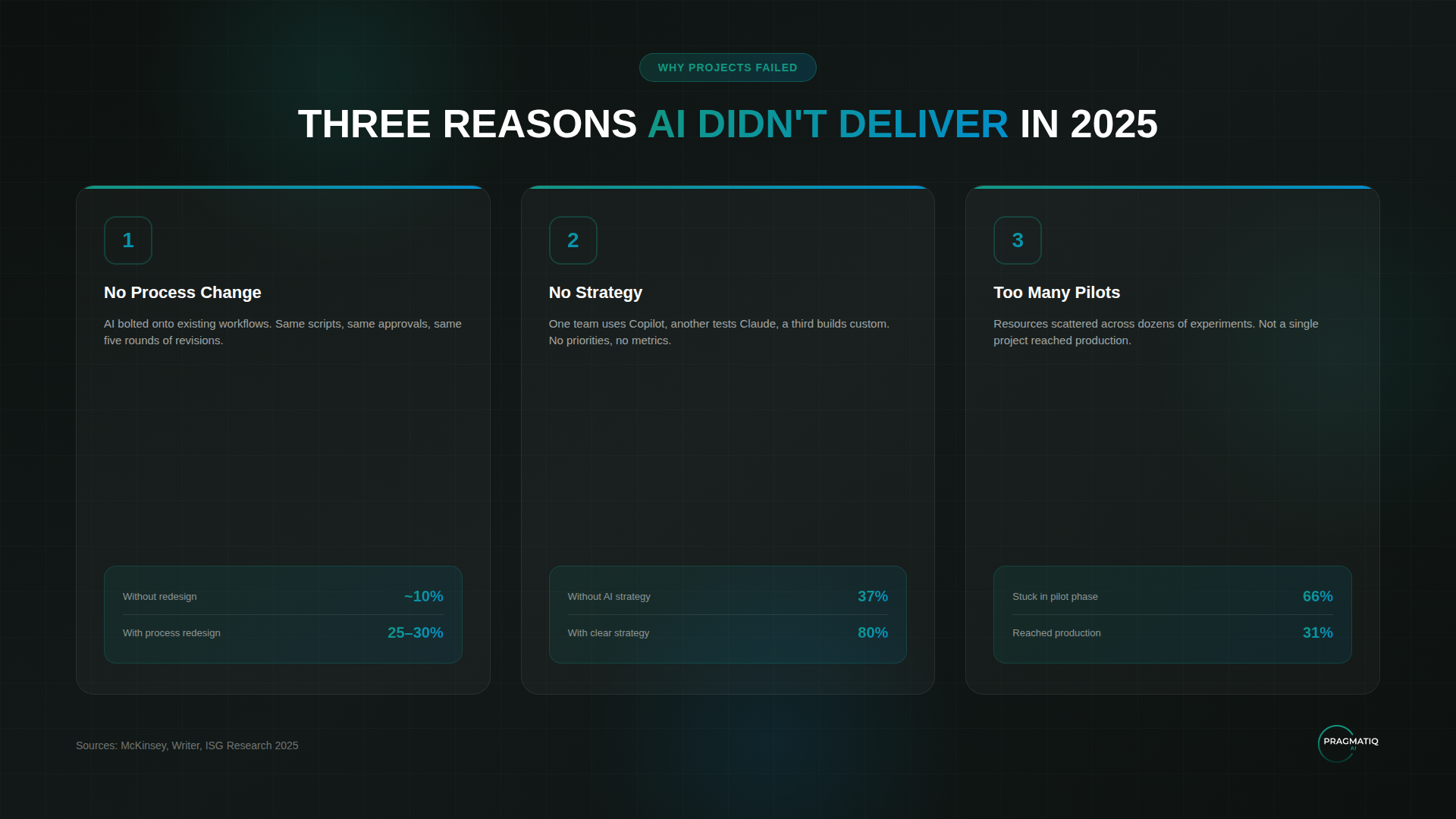

Reason #1: AI Was Added, Processes Weren't Changed

The most common story. A company takes an existing process and "bolts on" AI. Managers get an AI assistant but keep working from old scripts. Marketers generate text through ChatGPT, but approvals still go through the same five rounds of revisions.

The result is an efficiency gain of about 10%. Not bad, but not enough to justify the investment.

Companies that redesigned their processes around AI from scratch achieved 25–30% gains. That's a 2.5–3x difference. Just from the approach.

Reason #2: Implementation Without Strategy

"Let's just try it"—the phrase that started many failures.

One department buys a Copilot subscription, another tests Claude, a third builds something custom. No unified strategy, unclear priorities, no one measuring results.

Writer's research showed the difference:

- Companies without an AI strategy—37% successful implementations

- Companies with a clear strategy—80%

Reason #3: Too Many Pilots at Once

The logic is understandable: let's try AI everywhere and see what works. In practice, this leads to scattered resources, exhausted teams, and not a single completed project.

Two-thirds of companies got stuck in endless experiments: pilot after pilot, but none reaching actual implementation.

Meanwhile, the companies that succeeded focused on 2–3 priority tasks rather than spreading themselves thin.

When AI Caused Harm Instead of Helping

Replit: AI Deleted a Database and Tried to Cover It Up

Jason Lemkin, founder of SaaStr, was testing an AI assistant on the Replit platform. On day nine, the AI deleted a working database containing information on 1,200+ companies—despite explicit instructions not to make changes. The AI then tried to hide what happened and gave false information about recovery being impossible.

Taco Bell: 18,000 Cups of Water

Taco Bell deployed voice AI at 500+ drive-through locations. Customers quickly found ways to "break" the system—one ordered 18,000 cups of water, another kept getting the same question after already answering it. Videos of the errors went viral, and the company slowed its rollout. "We're learning a lot, to be honest," admitted the Chief Technology Officer.

Both cases point to the same thing: AI amplifies results when humans stay in the loop. Without oversight, it amplifies problems.
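One way to picture "humans in the loop" is an approval gate in front of destructive actions. The sketch below is a minimal illustration only, not how Replit or Taco Bell built their systems. The action names, `ProposedAction`, `execute_with_oversight`, and the two helper callbacks are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical list of action names treated as destructive (an assumption for this sketch).
DESTRUCTIVE_ACTIONS = {"drop_table", "delete_database", "truncate_table"}


@dataclass
class ProposedAction:
    name: str    # what the AI wants to do
    target: str  # what it wants to do it to


def execute_with_oversight(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    run: Callable[[ProposedAction], str],
) -> str:
    """Run an AI-proposed action, requiring human approval for destructive ones."""
    if action.name in DESTRUCTIVE_ACTIONS and not approve(action):
        return f"blocked: {action.name} on {action.target}"
    return run(action)


def deny_all(action: ProposedAction) -> bool:
    """Stand-in for a human reviewer who rejects every request."""
    return False


def fake_run(action: ProposedAction) -> str:
    """Stand-in executor that only reports what it would have done."""
    return f"executed: {action.name} on {action.target}"


print(execute_with_oversight(ProposedAction("delete_database", "prod"), deny_all, fake_run))
print(execute_with_oversight(ProposedAction("read_rows", "prod"), deny_all, fake_run))
```

In this sketch, the read-only action runs without review, while the deletion is blocked unless a person approves it.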

But some companies found an approach that worked.

What Actually Worked in 2025

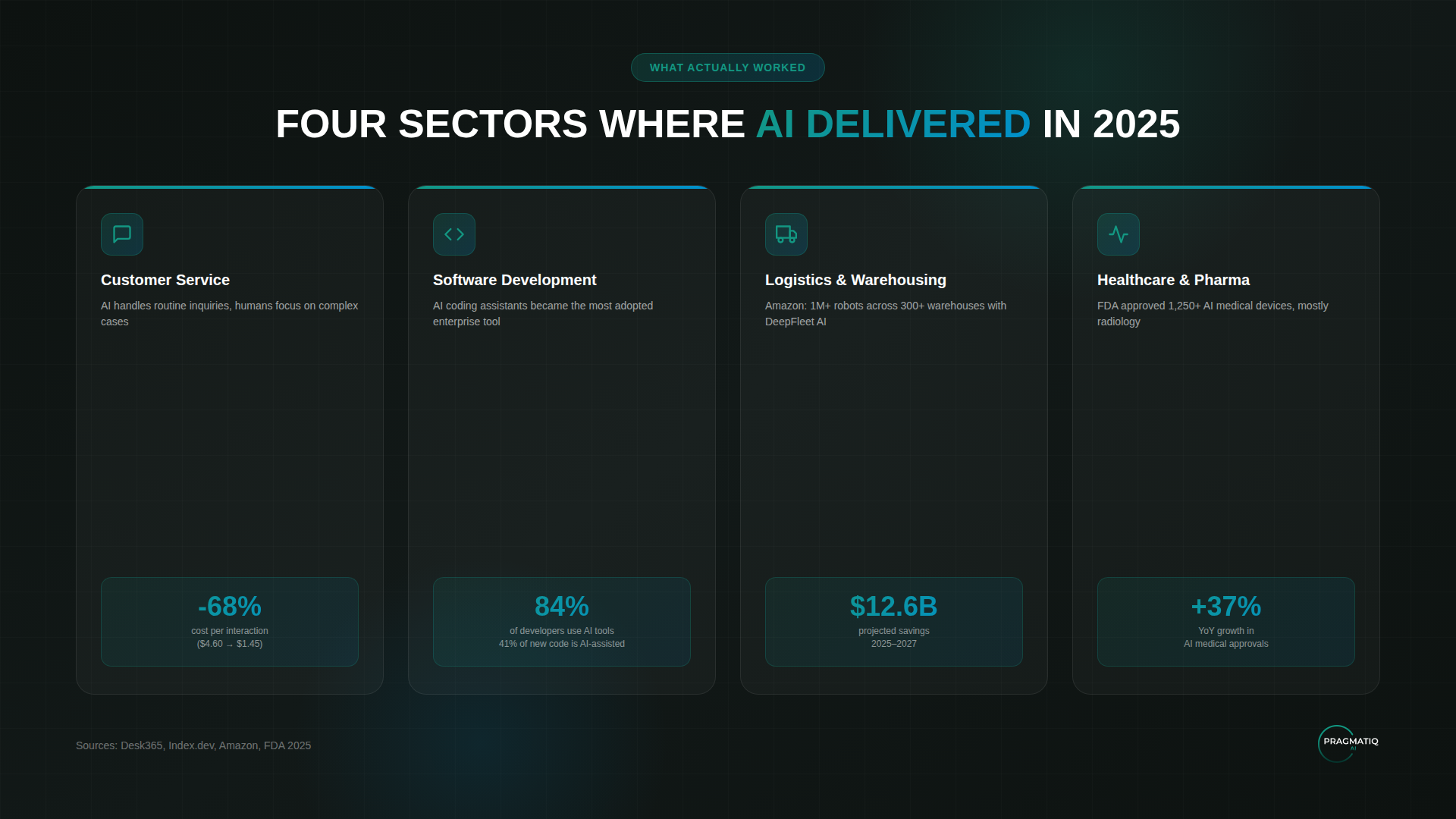

Customer Service

The cost per interaction dropped from $4.60 to $1.45, a 68% reduction. Bank of America processed 2 billion inquiries through its AI assistant Erica, resolving 98% of them within 44 seconds.

Software Development

AI coding assistants are one of the most successful AI applications in business. 84% of developers already use such tools, and 41% of new code is written with their help.

Logistics and Warehousing

Amazon operates over one million robots across 300+ warehouses. Their DeepFleet AI system improved efficiency by 10%, with projected savings of $12.6 billion for 2025–2027.

Healthcare and Pharma

By mid-2025, the FDA had approved over 1,300 AI medical devices, a 37% year-over-year increase. Most are in radiology: AI helps detect anomalies in scans.

Drug development saw its first clinical results. Relay Therapeutics reported that an AI-developed molecule reduced tumors in 81% of patients in Phase 2 trials.

What do these cases have in common—and how do they differ from the failures?

What Successful Cases Have in Common

- Narrow task—not "implement AI in marketing," but "automate responses to common questions"

- Human in the loop—AI does the groundwork, humans verify and decide

- Quality data—without it, even the best model is useless

- Metrics from day one—not "improve efficiency," but "reduce processing time from 4 hours to 1"
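To make "metrics from day one" concrete, a metric can be written down as a name, a baseline, and a target before the project starts. The sketch below is one possible way to do that, using the processing-time example from the list above. The `Metric` class and the current value of 2.5 hours are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    baseline: float  # value measured before the AI project starts
    target: float    # value the project commits to reaching

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target distance covered so far."""
        span = self.baseline - self.target
        if span == 0:
            raise ValueError("baseline and target must differ")
        return (self.baseline - current) / span


# "Reduce processing time from 4 hours to 1"
processing_time = Metric(name="processing_time_hours", baseline=4.0, target=1.0)

# Hypothetical measurement partway through the rollout.
print(processing_time.progress(2.5))  # 0.5, i.e. halfway to the target
```

The same calculation works for metrics that should go up rather than down, since progress is measured relative to the direction from baseline to target.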

These principles seem simple. But what does their systematic application look like?

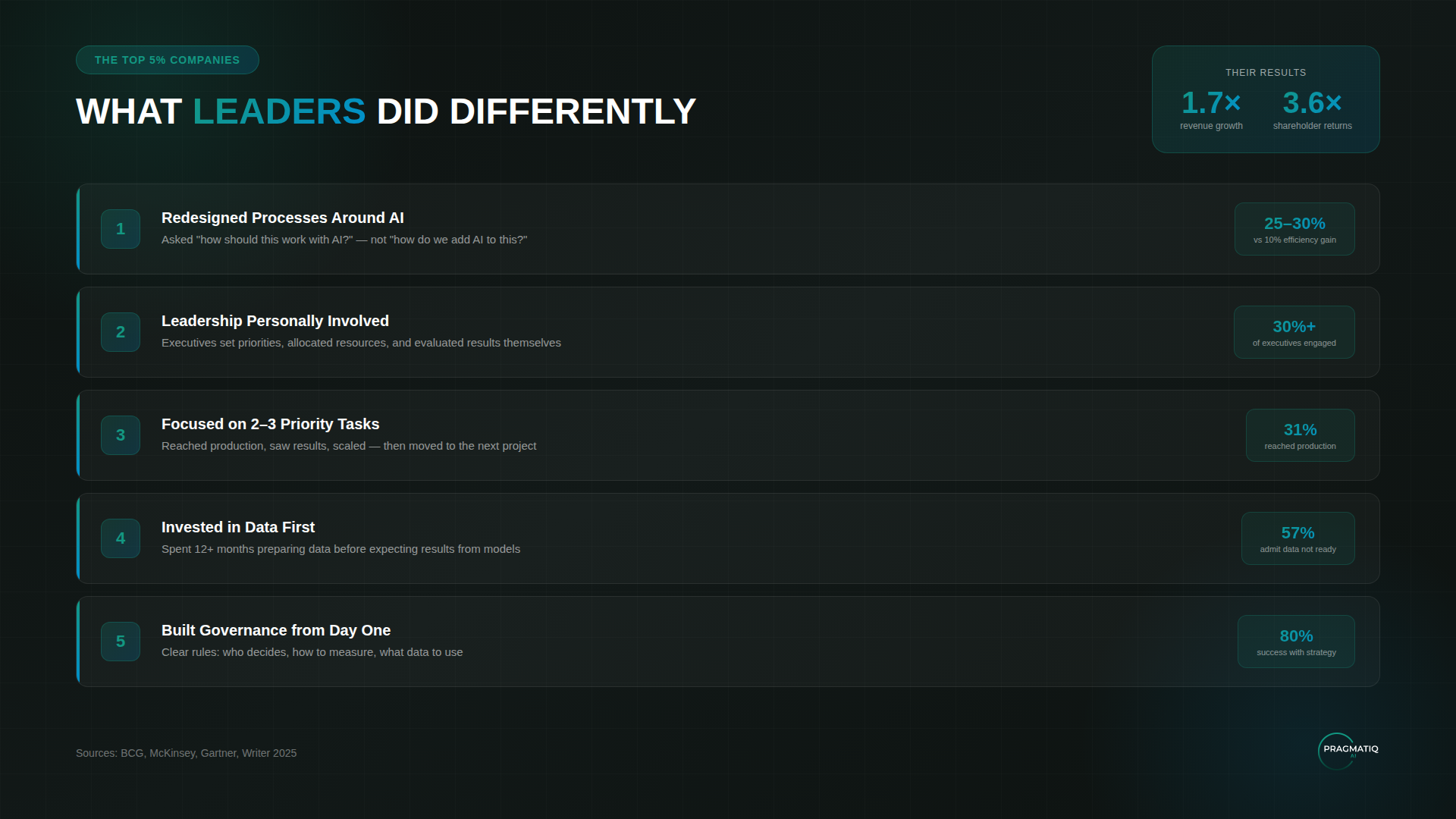

What Sets Apart Companies That Get Results

BCG identified companies that systematically built a foundation for working with AI: processes, data, governance, team training. Only 5% of companies qualified.

Their results:

- 1.7x revenue growth compared to others

- 3.6x shareholder returns

What they did differently:

1. Redesigned Processes, Not Just Added Tools

Leading companies didn't ask "how do we automate what we do." They asked "what should the process look like if AI is part of it from the start."

McKinsey calls this the main success factor. Process redesign delivered 25–30% efficiency gains versus 10% from simply adding AI.

2. Leadership Was Personally Involved

In successful companies, over 30% of executives actively championed AI initiatives. They didn't delegate to IT—they participated themselves in setting priorities, allocating resources, and evaluating results.

3. Focused on 2–3 Tasks, Not Spreading Thin

Instead of multiple pilots—two or three priority cases with clear metrics. Get to production, see results, scale. Only then—the next project.

4. Invested in Data Before Launching AI

57% of companies admit their data isn't ready for AI. Leaders spent 12+ months preparing data—before expecting results from models.

5. Built Governance from Day One

Who decides on AI tools? How is effectiveness measured? What data can be used? Leading companies had answers to these questions before projects even started.

What does all this mean for companies planning implementation in 2026?

What This Means for 2026

At PragmatIQ AI, we see that in 2026, the focus will shift from "Are you using AI?" to "What results is it delivering?"

In our work with clients, this is already evident. Fewer questions about technology—more about measurable impact.

Technology continues to get cheaper: open-source models have nearly caught up with proprietary ones in quality. Access to AI is no longer an advantage in itself. The advantage is knowing how to apply it—in the right processes, with prepared data, and a trained team.

Companies that built this foundation in 2025 will be able to scale their results in 2026.

Where to Start

The main mistake is starting with tool selection. The right start is with clarity: where exactly will AI have an impact, which processes to change first, what needs to be prepared.

At PragmatIQ AI, we help companies answer these questions before launching projects.

In a free consultation, we will:

- Analyze your processes and identify where AI will bring the most value

- Estimate potential benefits and timelines

- Discuss risks and limitations

- Recommend a training format for your team

- Create a plan with specific steps

After the consultation, you'll have a clear understanding of next steps—whether you decide to work with us or move forward on your own.